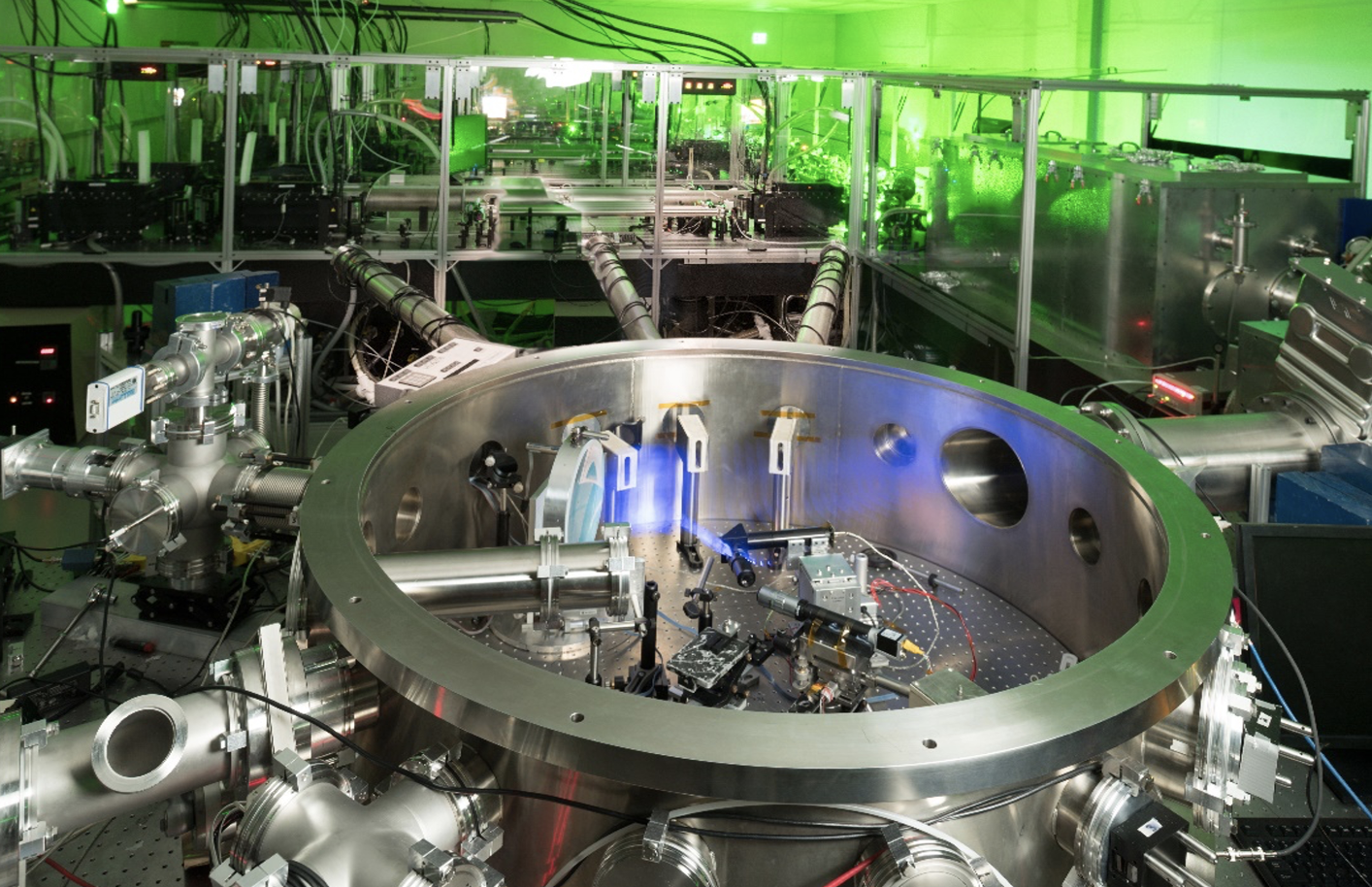

HEDSA

High Energy Density Science Association

Latest News

About Us

HEDSA was formed in 2005 as an organization that enables university and small business scientists working on high energy density research to:

- Advocate a broad-based High Energy Density Science research program based in universities and small businesses as well as in national laboratories and large companies.

- Advocate new initiatives to maintain the health of high energy density science and the related workforce in the United States, including the formation of a High Energy Density Science Users Program.

- Facilitate increased collaboration and communication opportunities among its members.

- Provide a point of contact (an individual) through whom university and small business high energy density research scientists can communicate with the government agencies responsible for sponsoring such research, facilitating the flow of information in both directions.

Steering Committee

Heath LeFevre

Secretary

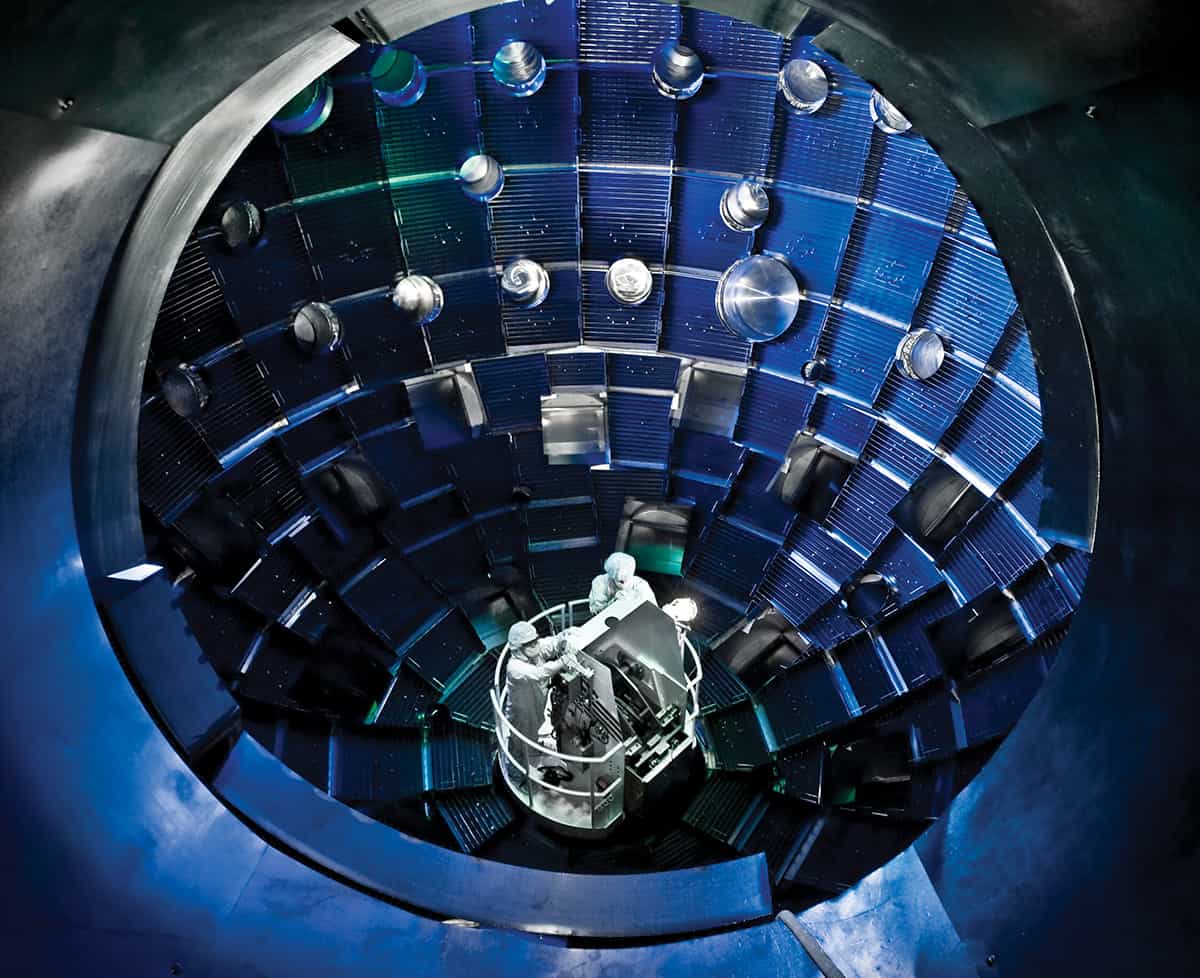

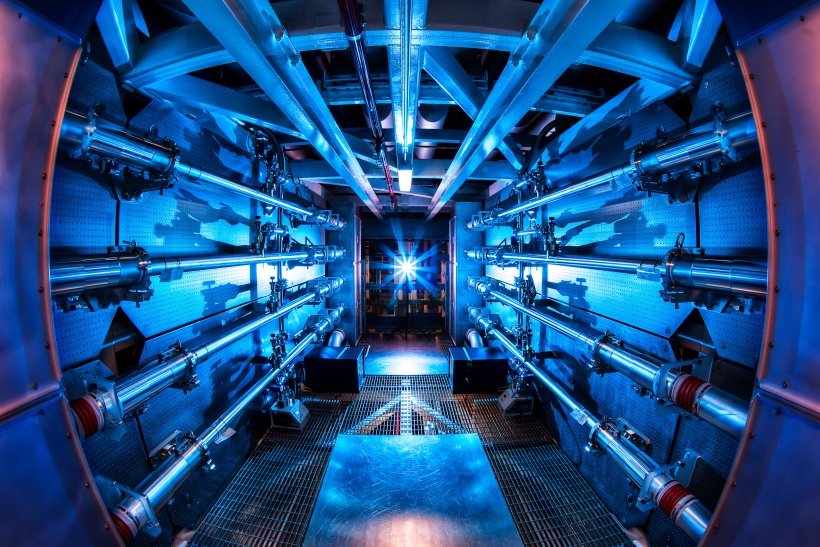

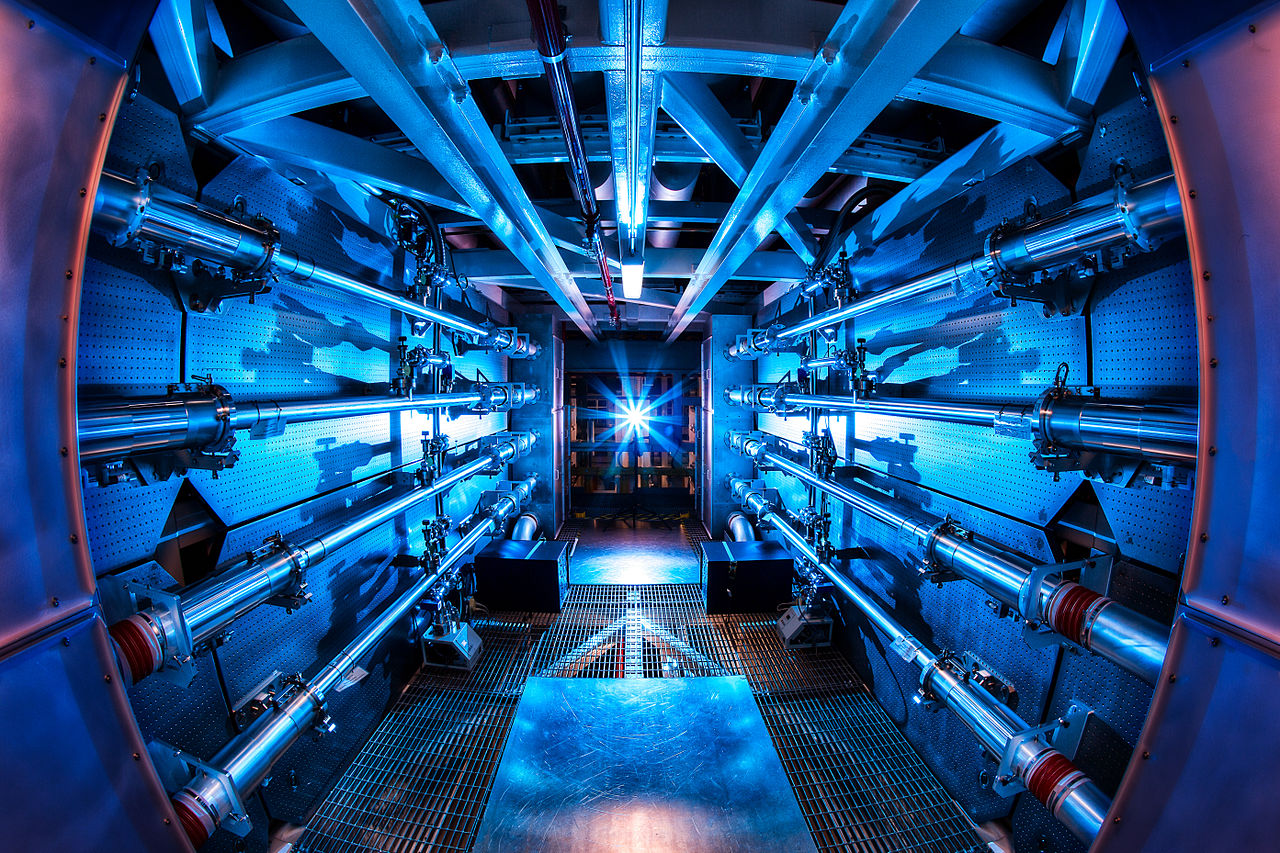

Dr. LeFevre is an NSF ASCEND postdoctoral fellow at the University of Michigan studying laboratory astrophysics problems relating to the radiation hydrodynamics of shocks and heat fronts using facilities such as the Omega-60 laser, the Omega-EP laser, the Z-Facility, and the National Ignition Facility.

Douglass Schumacher

Member

Douglass Schumacher is a Professor of Physics at The Ohio State University. He has studied intense laser plasma interactions for thirty years and directs the Scarlet Laser Facility, a sister LaserNetUS facility. Prof. Schumacher is the current Chair of LaserNetUS.

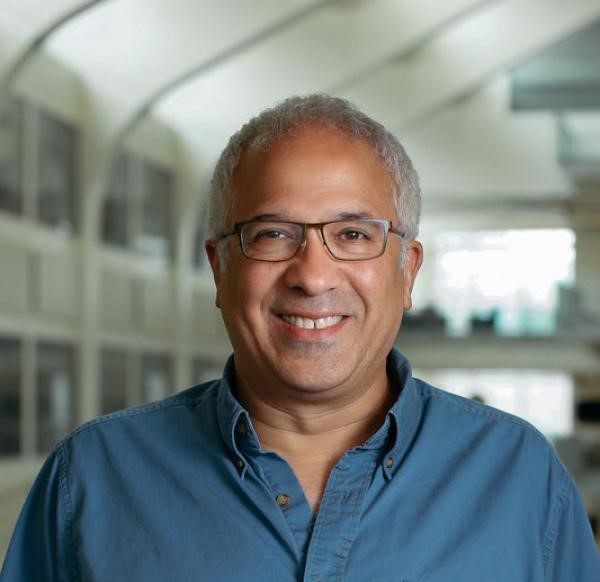

Bedros Afeyan

Member

Dr. Bedros Afeyan received his MS and Ph.D. in theoretical plasma physics from the University of Rochester. He worked at the University of Maryland, Lawrence Livermore National Laboratory, and UC Davis-Livermore before forming Polymath Research. His work focuses on the nonlinear optics of plasmas, statistical methods, kinetic theory, wavelets and multi-resolution analysis, photonics, Wigner optics, and laser fusion research.

Jason Clapp

Student Chapter President

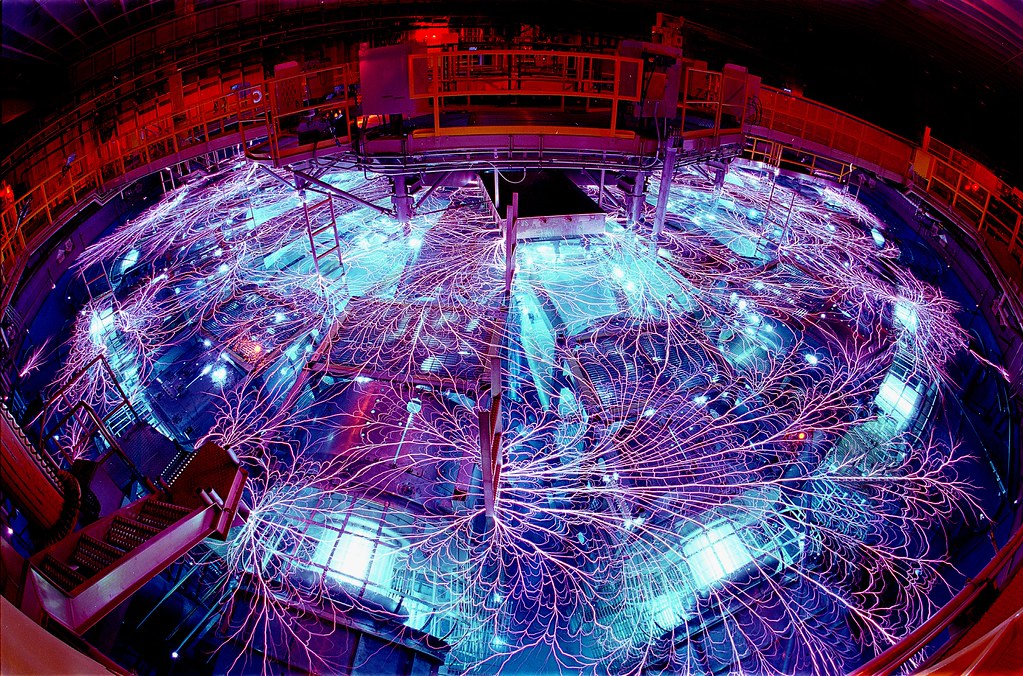

Jason Clapp is a fifth-year graduate student working with Dr. Roberto Mancini in the field of plasma spectroscopy. His research project involves tracer spectroscopy in Magnetized Liner Inertial Fusion (MagLIF) experiments on the Z pulsed power driver at Sandia National Laboratories.

HEDSA Student Chapter

The Student Chapter of US students in the field of High Energy Density Physics.